What technologies are trending today?

Based on current trends, the following IT technologies are likely to continue to gain momentum in 2023. It's essential to note that the IT landscape is continually evolving, and new technologies may emerge, while some may fade away.

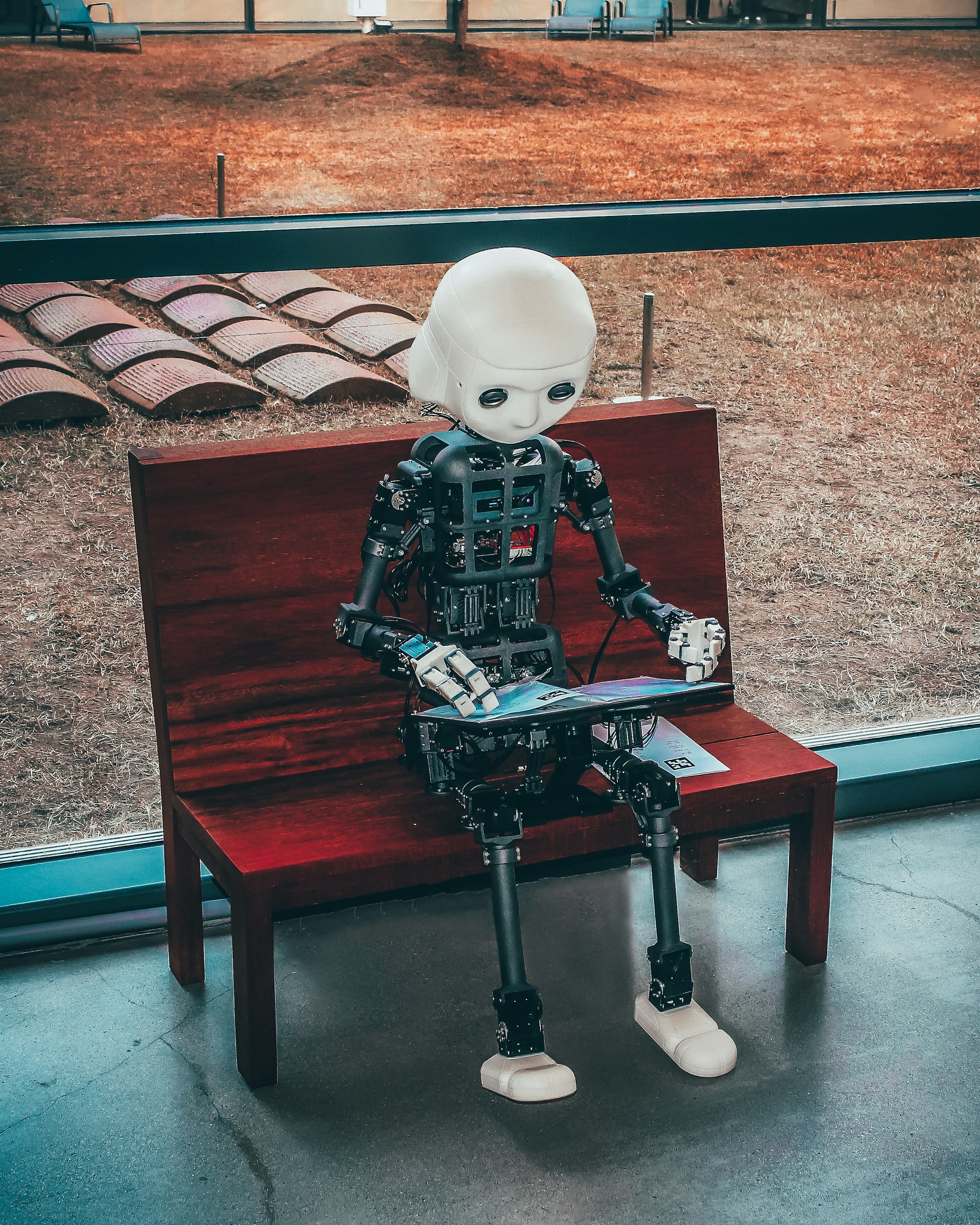

Artificial Intelligence and Machine Learning

Though they are closely linked, the terms artificial intelligence (AI) and machine learning (ML) should not be used interchangeably. AI is the broader concept, referring to computers' ability to mimic human cognition and behavior, while ML is a subset of AI that uses algorithms to automatically find patterns and gain insights from data.

Photo by Possessed Photography / Unsplash

The origins of AI and ML can be traced back to the 1950s, when Alan Turing proposed the Turing Test to gauge the intelligence of machines. In recent years, ML has become the primary driver behind the advancement of AI.

Many sectors have embraced ML to automate repetitive activities and provide creative insight. Marketers have also taken advantage of the potential, using it for customer segmentation and predictive analytics.
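To make the idea of customer segmentation concrete, here is a minimal sketch of one simple ML technique, k-means clustering, applied to a single feature. The spend figures, the starting centroids, and the `kmeans_1d` helper are all hypothetical, chosen only for illustration; real segmentation would normally use several features and an established library.

```python
from statistics import mean

def kmeans_1d(values, centroids, iterations=10):
    """Group numbers around the nearest centroid, then recompute the centroids."""
    centroids = list(centroids)
    clusters = []
    for _ in range(iterations):
        # Assignment step: each value joins the cluster of its closest centroid.
        clusters = [[] for _ in centroids]
        for v in values:
            nearest = min(range(len(centroids)), key=lambda i: abs(v - centroids[i]))
            clusters[nearest].append(v)
        # Update step: move each centroid to the mean of its cluster.
        centroids = [mean(c) if c else centroids[i] for i, c in enumerate(clusters)]
    return centroids, clusters

# Hypothetical annual spend per customer, in dollars.
annual_spend = [120, 150, 130, 900, 950, 1000, 140, 980]
centroids, segments = kmeans_1d(annual_spend, centroids=[100, 1000])

print(segments)   # low spenders in the first list, high spenders in the second
print(centroids)  # the average spend of each segment
```

The algorithm is never told which customers are "low" or "high" spenders; the two groups emerge from the data, which is the kind of pattern-spotting described above.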

Cybersecurity and Privacy

Privacy and cybersecurity are becoming increasingly intertwined as more personal data is processed or stored online. "Cybersecurity" refers to the measures taken to guard systems and data against unauthorized access, whereas "data privacy" refers to the rules and practices that protect individuals who share their data with businesses. Because cyberattacks can cause financial losses and data breaches threaten privacy, both have become key concerns for businesses.

Photo by GuerrillaBuzz Blockchain PR Agency / Unsplash

Companies must take precautions to safeguard their data, including putting strong cybersecurity strategies in place and complying with data privacy legislation. Organizations should also build privacy principles into their cybersecurity policies and promote a wider discussion about cybersecurity that recognizes its significance for privacy, trust, and responsible data stewardship.

Internet of Things (IoT) and Smart Home Technologies

Smart home and Internet of Things (IoT) technologies are becoming increasingly integrated into our daily lives. IoT refers to the network of physical devices that connect and exchange data over the internet, and smart home technology is the ability to operate household appliances using electronic, network-connected devices. Together, they give homeowners a smoother and easier experience, even from a distance.

Photo by Sebastian Scholz (Nuki) / Unsplash

Control and monitoring, cost and energy savings, environmental impact, improved security, and comfort are a few advantages of IoT smart home technologies. IoT and smart home applications include voice controls for in-floor heating and lighting systems, remote access to appliances like refrigerators and washing machines, and automated security systems using motion sensors or facial recognition cameras.

The Internet of Things (IoT) will continue to influence how we live in the future due to the ongoing progress of smart home technologies and their decreasing cost.

Cloud Computing and Hybrid Cloud

A hybrid cloud is a computing system that combines on-premises infrastructure, private clouds, and public clouds to produce a single, adaptable, and economically advantageous IT architecture. It enables businesses to utilize their current on-premises infrastructure while gaining access to the advantages of both public and private clouds.

Workload portability and agility across all cloud environments are provided by hybrid cloud, and the deployment of those workloads is automated. Additionally, it enables businesses to apply many of the same security precautions found in their on-premises infrastructure. A hybrid cloud that incorporates public cloud services from multiple cloud service providers is known as a hybrid multicloud.

In general, hybrid cloud gives businesses the option to flexibly and cost-effectively optimize their IT infrastructure while retaining control over their data and apps.

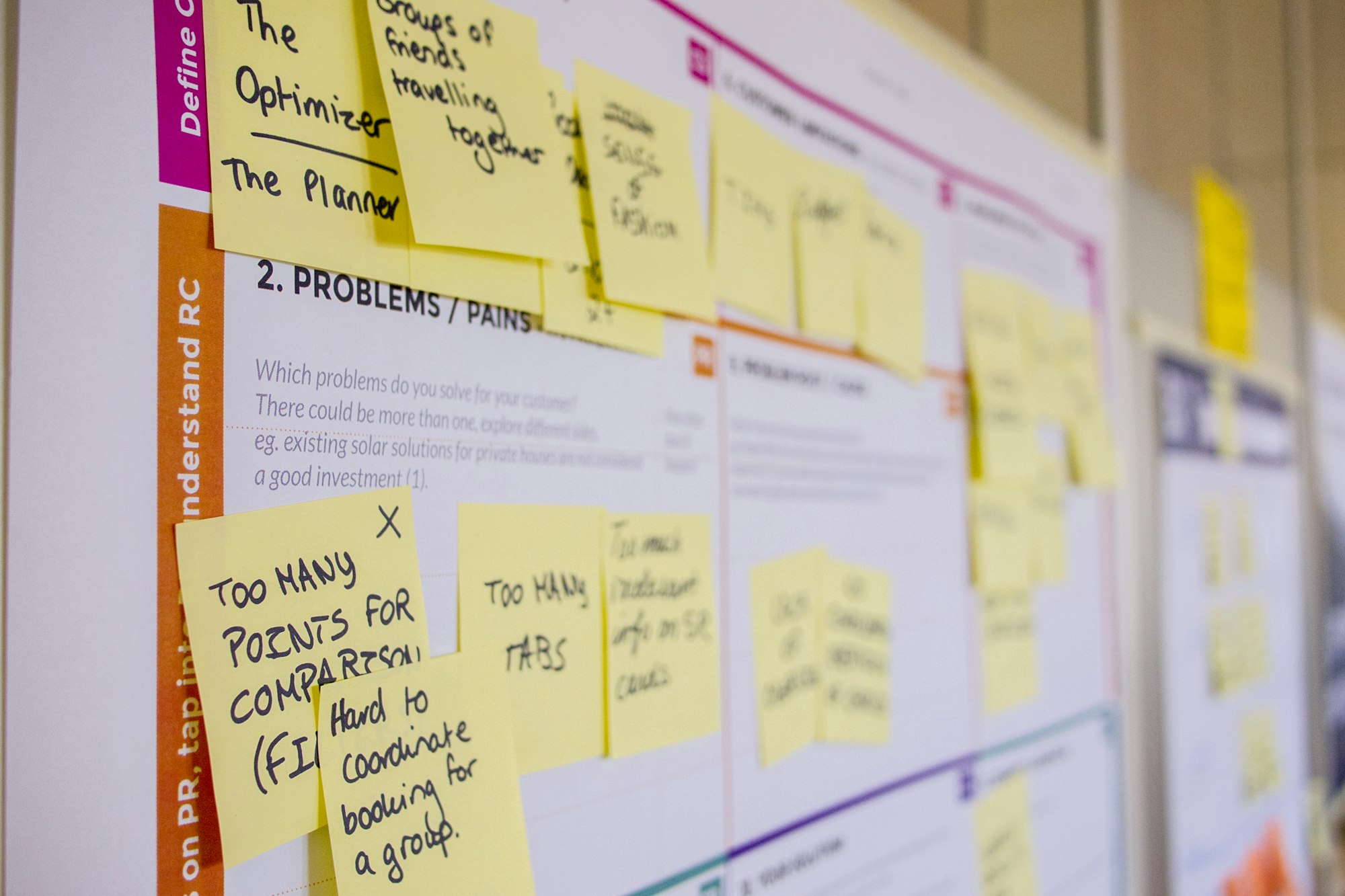

DevOps and Agile Development

Getting the final product out as quickly and efficiently as possible is a goal shared by DevOps and Agile, two software development approaches. DevOps is a method of software development that empowers teams to use agile concepts and techniques to build, test, and deliver software more quickly and reliably. Agile focuses on improving the productivity of developers and development cycles, while DevOps brings in the operations team to enable continuous delivery.

DevOps and Agile integration benefits the development lifecycle, increases developer and product management collaboration, focuses on test and delivery automation, gives developers structure for planned work, incorporates unplanned work into the process, automates workflow processes, and enables frequent releases.

Photo by Daria Nepriakhina 🇺🇦 / Unsplash

DevOps and Agile both provide a structure and framework that can speed up software delivery. Organizations can use both; there is no need to pick one over the other.

Blockchain and Cryptocurrency

A blockchain is a shared database that stores information in blocks, which are then linked together using cryptographic hashes. It is most famously used by digital currencies such as Bitcoin and Ethereum. Blockchains are decentralized, which means they can function without a central authority.
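The way blocks are chained together can be sketched in a few lines. The example below is a simplified illustration, not how Bitcoin or Ethereum is actually implemented: it leaves out networking, consensus, and mining entirely, and the block fields and helper names (`block_hash`, `add_block`, `is_valid`) are assumptions made for this sketch. It only shows how storing the previous block's hash makes tampering detectable.

```python
import hashlib
import json

def block_hash(block):
    """Hash a block's contents, which include the previous block's hash."""
    payload = json.dumps(block, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()

def add_block(chain, data):
    """Append a block that stores the hash of the block before it."""
    prev_hash = block_hash(chain[-1]) if chain else "0" * 64
    chain.append({"index": len(chain), "data": data, "prev_hash": prev_hash})

def is_valid(chain):
    """Check that every block still points at the true hash of its predecessor."""
    return all(
        chain[i]["prev_hash"] == block_hash(chain[i - 1])
        for i in range(1, len(chain))
    )

chain = []
add_block(chain, "genesis")
add_block(chain, "Alice pays Bob 5")
add_block(chain, "Bob pays Carol 2")
valid_before = is_valid(chain)

chain[1]["data"] = "Alice pays Bob 500"  # tamper with an earlier block
valid_after = is_valid(chain)

print(valid_before, valid_after)
```

Changing the data in an earlier block changes its hash, so the next block's stored `prev_hash` no longer matches and the check fails.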

Beyond cryptocurrencies, blockchain technology has a wide range of practical uses, including simplifying supply chains, improving the quality of medical records, and supporting research. Because of their distributed structure, blockchains also offer benefits such as improved security and transparency.

Photo by Shubham Dhage / Unsplash

Bitcoin, a digital currency, and Ethereum, a platform for decentralized apps and smart contracts, are both built on blockchain technology. Blockchain.com is a website that lets customers buy, sell, and exchange cryptocurrency.

Quantum Computing

Quantum computing is the field of computer science that studies technologies based on the principles of quantum theory. It uses quantum mechanics to tackle problems that are too complex for conventional computers. Quantum algorithms work in multidimensional spaces in which the patterns linking individual data points can emerge. Quantum computers are smaller than supercomputers and use less energy; a quantum processor is a wafer not much bigger than the one found in a laptop.

Quantum information processing uses qubits, which can exist in a combination of several states simultaneously. Traditional bits can only exist in one of two states (0 or 1), so qubits can encode more information. Thanks to quantum superposition and entanglement, the number of states a quantum computer can represent grows exponentially as qubits are added.
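The exponential growth can be illustrated with a small classical simulation. This is only a sketch of the bookkeeping, not a model of real quantum hardware: describing a register of n qubits takes 2^n amplitudes, one per basis state. The `uniform_superposition` helper is a hypothetical name used for this example.

```python
import math

def uniform_superposition(num_qubits):
    """Return the amplitudes of an equal superposition over all basis states."""
    size = 2 ** num_qubits            # one amplitude per basis state
    amplitude = 1 / math.sqrt(size)   # equal weights, so probabilities sum to 1
    return [amplitude] * size

state = uniform_superposition(3)
probabilities = [a ** 2 for a in state]

# How many amplitudes a classical simulator must track as qubits are added.
amplitude_counts = {n: 2 ** n for n in (1, 2, 10, 30)}

print(len(state))
print(amplitude_counts)
```

Three qubits already need 8 amplitudes, and 30 qubits need more than a billion, which is why simulating large quantum systems on conventional computers quickly becomes impractical.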

Although quantum computing has numerous potential applications, including machine learning, artificial intelligence, and optimization, it also has several drawbacks. For instance, environmental noise and other factors make it challenging to keep qubits in the desired state. The development of quantum computers is still in its infancy, so it may be some time before they are generally accessible.

Edge Computing and Fog Computing

Both edge computing and fog computing move computing operations closer to the locations where data is produced and gathered. Edge computing takes place either on the sensors themselves or on a gateway device adjacent to the sensors, while fog computing occurs at a greater physical distance from the sensors. Both technologies perform computations that are often done in the cloud while keeping data closer to its original location.

Fog computing is a compute layer that sits between the edge and the cloud; it takes data from edge layers and can process it before sending it to the cloud. Edge computing can process data for business applications and communicate the results to the cloud. Fog computing offers scalability because of the large number of data points it collects, but it may be more expensive because it requires both edge and cloud solutions.

Fog computing has intelligence situated between the edge and cloud, whereas edge computing has intelligence situated where data is generated. Autonomous vehicles, which use edge servers connected with cameras and sensors on the vehicle to process data in milliseconds, are examples of edge computing. Fog computing examples include embedded programs on assembly lines that measure temperature every second using temperature sensors linked to edge servers.
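The division of labor between the layers might look something like the sketch below. The function names, thresholds, and temperature readings are all hypothetical, and a real deployment would run these steps on separate devices connected over a network rather than in one script.

```python
from statistics import mean

def edge_filter(readings, low=10.0, high=90.0):
    """Edge layer: drop implausible sensor values right where they are produced."""
    return [r for r in readings if low <= r <= high]

def fog_aggregate(batches):
    """Fog layer: combine filtered batches from several edge devices into one summary."""
    values = [r for batch in batches for r in batch]
    return {"count": len(values), "mean": mean(values), "max": max(values)}

# Hypothetical temperature readings (degrees Celsius) from two assembly-line sensors.
sensor_a = [21.5, 22.0, 250.0, 21.0]   # 250.0 is a glitch
sensor_b = [35.0, 36.5, -40.0]         # -40.0 is a glitch

# Each edge device cleans its own data; the fog node summarizes across devices.
summary = fog_aggregate([edge_filter(sensor_a), edge_filter(sensor_b)])

# Only this small summary would be sent on to the cloud.
print(summary)
```

Seven raw readings are reduced to a three-field summary before anything leaves the local network, which is the bandwidth and latency benefit both approaches aim for.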

Augmented Reality (AR) and Virtual Reality (VR)

Virtual reality (VR) and augmented reality (AR) are two emerging technologies that are changing the way we interact with displays. AR builds on a real-world environment, whereas VR is entirely virtual. AR users keep some control over their presence in the real world, while a VR user's experience is controlled by the system. AR can be accessed with a smartphone, whereas VR needs a headset. AR enhances a blend of the real and virtual worlds, while VR enhances only a virtual one.

While VR transports users to a virtual world that they can explore, AR lets users view the reality around them with digital visuals superimposed on it. Several AR headsets, such as the Microsoft HoloLens and the Magic Leap One, are on the market. There are also apps that use AR on devices like smartphones and laptops without the need for a headset.

Photo by Bram Van Oost / Unsplash

The use of VR and AR technology is expanding quickly, and many industry experts believe this trend will continue. These developing technologies open up countless commercial and job opportunities; one forecast anticipated that the AR and VR industry would reach $209.2 billion by 2022. Software engineers, game designers, 3D animators, UX/UI designers, product managers, marketers, data scientists, hardware engineers, content creators, project managers, business analysts, and other professionals are in high demand as companies work to develop and improve VR and AR technology.

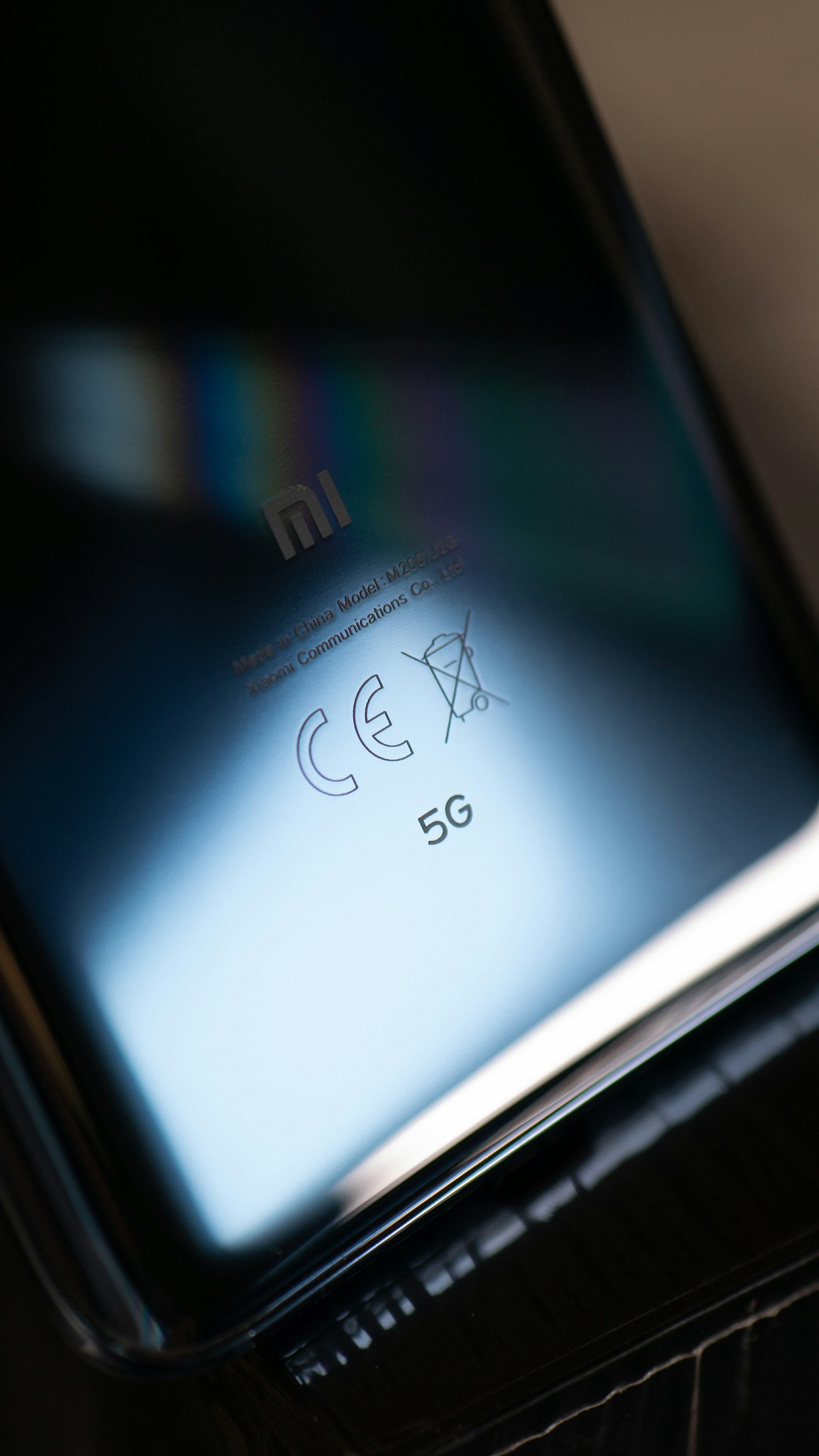

5G and Wi-Fi 6

Both Wi-Fi 6 and 5G are wireless technologies that provide faster network speeds, lower latency, and higher bandwidth. Wi-Fi 6 is a wireless LAN (WLAN) technology, whereas 5G is a cellular technology. The two differ in licensing, authentication, use cases, and how devices communicate.

While Wi-Fi 6 typically covers local areas such as the inside of buildings, 5G offers wide regional coverage. Thanks to their overlapping footprints, they work together to fill wireless service gaps across large campuses and urban regions. End users have additional options when 5G and Wi-Fi 6 are deployed together, since they can quickly switch between the two depending on their location, security needs, or access rights.

Private 5G and Wi-Fi 6/6E are complementary technologies that can coexist and perform better when used in tandem to enable various use cases. Private 5G complements Wi-Fi by offering extremely wide coverage (mostly outdoors) in a separate spectrum with up to three times the bandwidth of Wi-Fi 4, enabling more devices to connect simultaneously. Its higher throughput also means less lag than Wi-Fi 4 during video conference sessions.

Overall, 5G and Wi-Fi 6 provide the best of both worlds when combined, offering quick, seamless, and secure connectivity that lets users move between networks and enter and exit buildings with ease.